Using LLM in GenAI Projects - Part 2 (Pipeline)

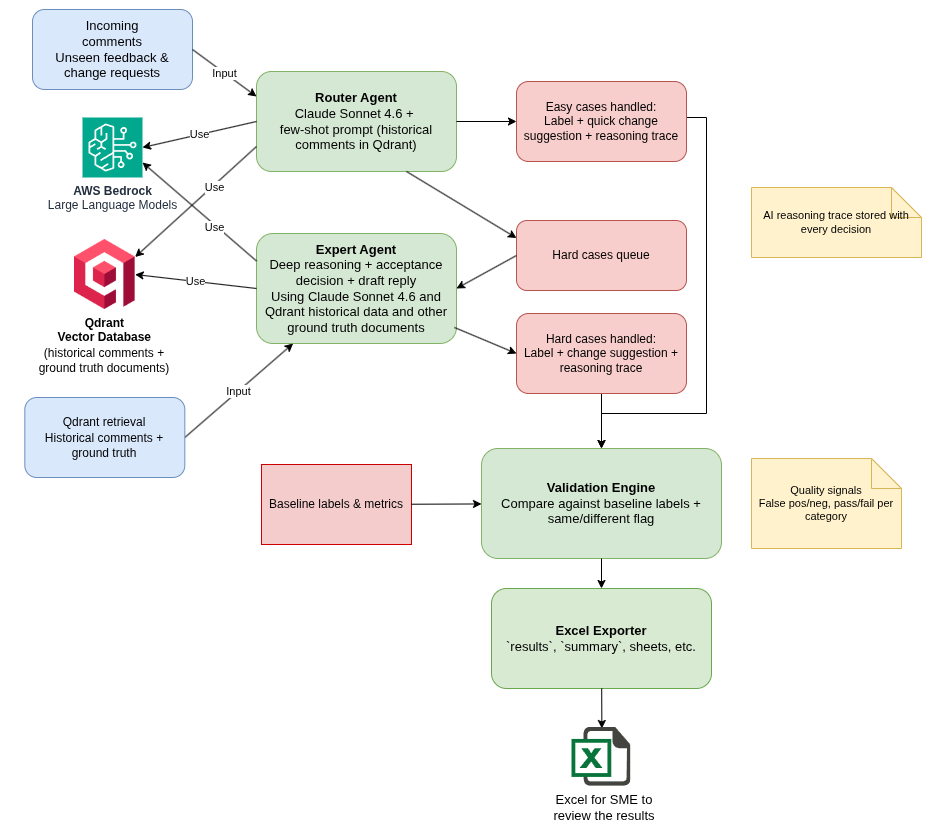

A generative AI pipeline.

Introduction

In Part 1, I described how I prepared and ingested historical comments and ground truth documents into Qdrant.

In this second part, I focus on the LLM stage: how the system classifies unseen comments, applies expert-level reasoning to complex cases, validates quality, and exports results for subject matter expert (SME) review.

From Ingestion to LLM Workflow

After ingestion, the pipeline runs in this order:

- Router Agent classifies comments into routing categories (easy cases / hard cases) and handles the easy cases itself.

- Expert Agent analyzes all hard cases more thoroughly and drafts a decision on whether each comment is accepted or rejected.

- Validation compares generated categories to baseline labels.

- Export converts the final JSON output into an Excel file so that SMEs can review the results.
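The first two steps of that order can be sketched as a small dispatch loop. This is a minimal illustration, not the project's actual code: the function names, the `difficulty` key, and the plain-dict record shape are all assumptions made for the example. Validation and export would then run on the returned list.

```python
from typing import Callable

# Hypothetical record shapes: each comment and each result is a plain dict.
Comment = dict
Result = dict


def run_pipeline(
    comments: list[Comment],
    route: Callable[[Comment], Result],
    expert: Callable[[Comment, Result], Result],
) -> list[Result]:
    """Route every comment, then escalate only the hard cases."""
    results = []
    for comment in comments:
        routed = route(comment)
        if routed["difficulty"] == "hard":
            # Hard cases get the slower, retrieval-grounded second pass.
            routed = expert(comment, routed)
        results.append(routed)
    return results
```

Passing the two agents in as callables keeps the loop easy to test with stubs before any model call is wired in.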

Router Agent: Fast First Pass

The Router Agent is a lightweight gatekeeper.

It uses an LLM prompt plus few-shot examples from historical comments (stored in the Qdrant vector database) to classify each test comment quickly into practical routing buckets. The goal at this stage is speed and stable structure, not deep scientific judgment.

In this project, the router uses Claude Sonnet 4.6 (hosted in AWS Bedrock) and also writes a short AI reasoning trace for each decision. For easy cases, it can already produce a first draft change suggestion, so the downstream review output is not just a label.
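To make the few-shot idea concrete, here is a rough sketch of how such a router prompt could be assembled. The prompt wording, the JSON field names (`difficulty`, `reasoning`, `draft_change`), and the shape of the example records are assumptions for illustration. The retrieval of similar historical comments from Qdrant and the actual Bedrock call are left out.

```python
import json


def build_router_prompt(comment: str, examples: list[dict]) -> str:
    """Assemble a few-shot classification prompt for the router.

    `examples` stands in for historical comments already retrieved
    from the vector database (hypothetical keys: text, difficulty).
    """
    lines = [
        "Classify the comment as 'easy' or 'hard'.",
        'Reply with JSON only, using the keys "difficulty", "reasoning" and "draft_change".',
        "",
    ]
    for example in examples:
        lines.append(f"Comment: {example['text']}")
        lines.append(f"Answer: {json.dumps({'difficulty': example['difficulty']})}")
        lines.append("")
    # The unseen comment goes last so the model completes the pattern.
    lines.append(f"Comment: {comment}")
    lines.append("Answer:")
    return "\n".join(lines)
```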

Expert Agent: Deep Reasoning for Hard Cases

Hard cases are the most demanding. They require grounding against the ground truth main document text and other references.

For those comments, the Expert Agent retrieves context from Qdrant and decides on one of two outcomes:

- accept the suggested content change

- reject the suggestion, with a rationale

At this stage, the same Sonnet model produces richer outputs for SME review: updated AI reasoning, a draft reply to the commenter, and a concrete draft change proposal. This gives the reviewer both the decision and the model’s justification path.
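One way to picture the expert output is as a small record type. The sketch below is an assumption about how it could look, not the project's real schema: the class name, the field names, and the rule that a rejection must carry non-empty reasoning are all illustrative.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ExpertDecision:
    """Hypothetical shape of one Expert Agent result."""

    comment_id: str
    decision: str      # "accepted" or "rejected"
    reasoning: str     # updated AI reasoning shown to the reviewer
    draft_reply: str   # draft reply to the commenter
    draft_change: str  # concrete draft change proposal

    def __post_init__(self) -> None:
        if self.decision not in ("accepted", "rejected"):
            raise ValueError(f"unknown decision: {self.decision!r}")
        # A rejection without a rationale is not reviewable.
        if self.decision == "rejected" and not self.reasoning.strip():
            raise ValueError("a rejection needs a rationale")

    def to_json(self) -> str:
        return json.dumps(asdict(self))
```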

Validation and SME-Friendly Output

Once results are generated, validation measures how well model predictions match the baseline categories.
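As a rough sketch of that comparison, the function below computes per-category counts from pairs of baseline and predicted labels. The metric definitions are my assumptions for the example: the success rate is taken as the share of a category's baseline comments that the model labeled correctly, and a category passes when that rate reaches its target. The function and key names are hypothetical.

```python
from collections import Counter


def category_metrics(pairs, targets):
    """pairs: iterable of (baseline, predicted) labels.
    targets: category -> minimum acceptable success rate.
    """
    total, correct = Counter(), Counter()
    false_pos, false_neg = Counter(), Counter()
    for baseline, predicted in pairs:
        total[baseline] += 1
        if baseline == predicted:
            correct[baseline] += 1
        else:
            false_neg[baseline] += 1   # the baseline category was missed
            false_pos[predicted] += 1  # the predicted category was wrong
    summary = {}
    for category in sorted(set(total) | set(false_pos)):
        n = total[category]
        rate = correct[category] / n if n else 0.0
        summary[category] = {
            "false_positives": false_pos[category],
            "false_negatives": false_neg[category],
            "success_rate": rate,
            # Assumption: a category without a target must be perfect to pass.
            "passed": rate >= targets.get(category, 1.0),
        }
    return summary
```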

After validation, results are exported to Excel as a practical review package for SMEs, not just as a raw data dump.

The export includes three sheets:

- results: row-level model output side by side with baseline labels, including an explicit SAME/DIFFERENT comparison flag.

- summary: automatically calculated category-level metrics (target rates, false positives, false negatives, actual success rates, pass/fail).

- results-explanation: a compact guide explaining each field and how to interpret the metrics.

The workbook is also formatted for fast manual review: mismatches are highlighted, key AI output columns are emphasized, and long text is wrapped for readability.
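The row-level comparison behind the results sheet could be prepared roughly like this before anything is written to a workbook. The column names and the `comment_id` / `category` keys are hypothetical, and the Excel writing and formatting step itself (done with a spreadsheet library) is not shown.

```python
def build_results_rows(results: list[dict], baseline: dict) -> list[dict]:
    """Join model output with baseline labels and flag each row.

    results:  model output records (hypothetical keys: comment_id, category)
    baseline: comment_id -> baseline category
    """
    rows = []
    for item in results:
        expected = baseline.get(item["comment_id"], "")
        rows.append({
            "comment_id": item["comment_id"],
            "ai_category": item["category"],
            "baseline_category": expected,
            # This flag is what a reviewer would filter or highlight on.
            "comparison": "SAME" if item["category"] == expected else "DIFFERENT",
        })
    return rows
```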

An important implementation detail is strictly structured output: both agent stages produce JSON, which is easier to validate and analyze systematically.
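A minimal sketch of what enforcing that structure can look like on the receiving side: parse the raw model text, and fail loudly if it is not a JSON object with the expected fields. The field list here is a made-up example schema, not the one used in the project.

```python
import json

# Hypothetical minimal schema: field name -> expected Python type.
REQUIRED_FIELDS = {"category": str, "reasoning": str}


def parse_agent_output(raw: str) -> dict:
    """Parse raw model text and reject anything off-schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"missing or mistyped field: {name}")
    return data
```

Rejecting malformed output at this point means the validation and export steps only ever see records with a known shape.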

Practical Lessons from Part 2

A few practical takeaways:

- Split responsibilities between agents: fast routing first, deep analysis only where needed.

- Keep outputs strictly structured (JSON).

- Use retrieval context for difficult categories instead of relying on model memory.

- Always include a human-readable export step for domain expert review.

Special Thanks

I want to thank my AI mentor Tuomas Jokela for providing excellent requirement analysis for this project. It was really easy to implement the generative AI pipeline based on the clear and structured requirements provided. This made the development process smooth and efficient, allowing me to focus on building the solution rather than clarifying what needed to be built.

Conclusion

Part 1 made the data retrievable. Part 2 made it actionable.

This two-step approach (ingestion first, then LLM orchestration) has worked well in this POC: it keeps the pipeline understandable, measurable, and easier to improve iteratively.

The writer is working at a major international IT corporation building cloud infrastructures and implementing genAI applications on top of those infrastructures.

Kari Marttila

Kari Marttila’s profile on LinkedIn: https://www.linkedin.com/in/karimarttila/